Deep Cut

What happens when you add an editorial layer between a listener and a recommendation?

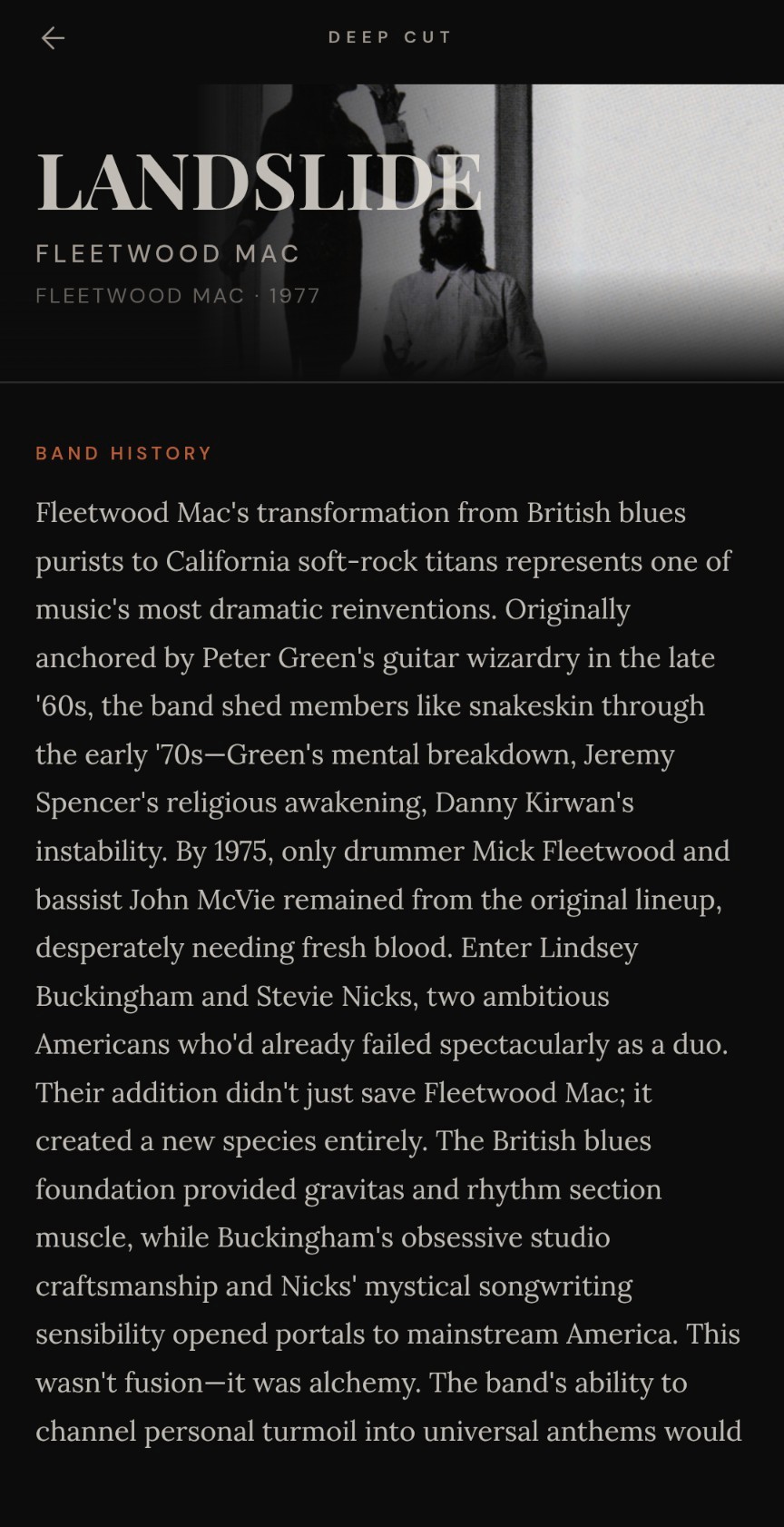

Deep Cut is a mobile-first music application that identifies any song playing in the room and generates a behind-the-scenes editorial on the spot: artist history, the story behind the track, critical reception, and deep lore. Then it invites you to dig deeper through recommendations designed to expand your taste.

Deep Cut is Live

Deep Cut is a working prototype in active development. Try it out on your phone. Identify a song that's playing, then dig deeper.

The Concept

For music lovers, there’s a moment when you hear a song and something clicks. You need to know what it is, who made it, where it came from. Most products stop at identification. Deep Cut treats that moment of curiosity as the beginning of an experience, not the end of one.

After identifying a song, Deep Cut generates a piece of editorial content. Not a Wikipedia summary, but something closer to what you’d read in a great music magazine. Something with a voice. The artist’s history, the story behind the recording, critical reception, and a piece of trivia that reframes how you hear it.

Then Deep Cut surfaces three recommendations, each pulling in a different direction: a key influence, a contemporary in conversation with this song, and a surprising connection. Those categories are deliberate. They’re invitations to follow your curiosity.

Effortless Delivery vs Interest Expansion

Effortless personalization is behavior-driven and often invisible. On the flip side, there is a more active form of personalization, often driven by someone's interest. Deep Cut takes this further by exploring an Interest Expansion model. The system gives you a foothold into a piece of content. That foothold creates new desire. The user ends the experience wanting something they didn’t know they wanted.

The Core Loop

User holds phone up and lets it listen to a song playing in the room

App records 7 seconds of ambient audio via the browser’s native MediaRecorder API

Audio is fingerprinted and matched via AudD

LLM generates a rich editorial narrative, streamed progressively to the screen

User reads a full editorial

Three curated recommendations invite the reader to dig deeper: a key influence, a song in conversation, and a surprising connection
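The loop above can be read as a small state machine. The sketch below is one way to model it, not the app's actual code: the phase names, event names, and the `nextPhase` function are all hypothetical, chosen only to mirror the steps listed.

```typescript
// Hypothetical phases for the core loop; names are illustrative, not from the app.
type Phase = "idle" | "listening" | "identifying" | "writing" | "reading" | "error";

// Hypothetical events that move the loop forward.
type LoopEvent =
  | "TAP_MIC"         // user holds the phone up and starts listening
  | "CLIP_RECORDED"   // the ambient audio clip is ready
  | "MATCH_FOUND"     // fingerprinting returned a song
  | "NO_MATCH"        // fingerprinting came back empty
  | "EDITORIAL_DONE"  // the streamed editorial has finished
  | "RESET";          // back to the home screen

// Pure transition function: events that make no sense in a phase are ignored.
function nextPhase(phase: Phase, event: LoopEvent): Phase {
  if (event === "RESET") return "idle";
  switch (phase) {
    case "idle":
    case "error":
      return event === "TAP_MIC" ? "listening" : phase;
    case "listening":
      return event === "CLIP_RECORDED" ? "identifying" : phase;
    case "identifying":
      if (event === "MATCH_FOUND") return "writing";
      if (event === "NO_MATCH") return "error";
      return phase;
    case "writing":
      return event === "EDITORIAL_DONE" ? "reading" : phase;
    default:
      return phase; // "reading" only leaves via RESET in this sketch
  }
}
```

Keeping the transitions in one pure function makes it easy to see that a failed match is the only path into the error state, and that the user can retry from there with the same tap that starts the loop.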

Guided Demo

Home Screen

The original home screen label above the microphone button read "What's that song?" It was conversational and warm, and it mirrored what a user might actually be thinking in that moment. But it was solving the wrong problem.

The microphone button is self-explanatory — users understand intuitively that tapping it will identify the song. The real gap for a first-time user isn't recognition; it's expectation. They don't yet know that what's coming is a full editorial, not just a song title. "What's that song?" did nothing to set that expectation.

The revised label, "Hear a song. Read its story," addresses this directly. It frames the app's two-act experience in the simplest possible terms: something passive happens (you hear something), and something rich follows (you read about it). The verb "hear" puts the user in the right moment rather than framing identification as a task. And "read its story" signals that what's coming is worth reading, not just a lookup result.

Dig Deeper

The recommendation section went through several naming rounds. Early candidates like “Listen Further” were clear but flat. They described the function without connecting to the product’s identity. “Keep Digging” and “Below the Surface” pulled from the right metaphor but missed on tone: one felt like a command, the other read more like a label than an invitation. “B-Sides” had personality but positioned the recommendations as lesser content.

“Dig Deeper” stuck because it does three things at once. It extends the central metaphor that gives the product its name. It uses active language that invites rather than instructs. And it matches the editorial register of the rest of the experience.

Error States

The original error state had multiple problems. The headline and body copy contradicted each other, the body over-claimed about what the system technically knew had happened, and “Try again” appeared twice on the same screen.

The revision started with the headline. The goal was language that felt like a person, not a system. “Couldn’t place it” landed because it sounds like a record store employee or a friend leaning in to listen closer; someone genuinely trying and coming up short, not a process returning a null result. That framing set the tone for everything that followed.

The candidates:

Nothing came through. (honest, neutral)

No match found. (clean, unambiguous)

Lost the thread. (metaphorical, but contradicted by the original body copy)

We drew a blank. (human, a little self-deprecating)

Couldn’t place it. ✓ (casual, direct, in voice)

Voice & Tone

The first prototype validated the core functionality: identify a song, generate an editorial article. But the early output was inconsistent. Tone drifted between articles, and there was no systematic framework for how Deep Cut should sound across different types of music. The prototype worked. The voice didn’t yet.

So I stepped back and built a comprehensive Voice and Tone Guide that defines the product’s editorial voice, maps how tone adapts across genres and content surfaces, and provides concrete examples for every context a content designer would encounter.

The Voice:

Tone:

Deep Cut writes about Bon Iver and Kendrick Lamar, Miles Davis and Charli XCX. The voice stays constant (always knowledgeable, narrative-driven, and specific), but the tone shifts to honor the music.

Each genre gets its own tonal profile: sentence texture guidance, vocabulary direction, cultural reference points, and Do/Don’t examples that make the distinction concrete. These profiles became the basis for revising the generation prompts, where genre-specific tone instructions are now passed to the model at generation time.

Guardrails

The first version of the generation prompt said “specific details are always better than generalities” but gave no instruction for when the LLM didn’t know a specific detail. The implicit behavior was to hallucinate confident-sounding ones.

The clearest failure surfaced with cover songs. When the audio fingerprinting service returned a match on a cover rather than the original recording, the AI generated a full, fluent editorial attributing the song’s creation to the cover artist entirely. The writing was good. The premise was wrong.

The revised prompt made three targeted additions:

A pre-writing checkpoint: identify only what the AI is certain of before generating anything.

A cover song rule: always credit the original songwriter. Never describe a cover as if the performing artist wrote it.

Per-section accuracy constraints: Critical Reception got “Only cite publications you are certain existed. Do not fabricate or paraphrase review quotes.” Trivia and Deep Lore got “Every claim here must be grounded in something you actually know. A wrong specific detail is worse than no detail.”
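To make the three additions concrete, here is a minimal sketch of how they might be assembled into one prompt. The cover rule and per-section constraints reuse the wording quoted above; the role line and checkpoint sentence are paraphrases, and `TrackMatch` and `buildGuardrailPrompt` are hypothetical names, not the app's real code.

```typescript
// Hypothetical shape of the fingerprinting result passed to the prompt.
interface TrackMatch {
  title: string;
  artist: string;
}

// Paraphrased framing: a journalist identity that ties precision to credibility.
const ROLE = "You are a music journalist. Your credibility depends on precision.";

// Paraphrase of the pre-writing checkpoint described above.
const PRE_WRITING_CHECKPOINT =
  "Before writing anything, identify only what you are certain of about this recording.";

// Wording taken from the cover song rule above.
const COVER_RULE =
  "Always credit the original songwriter. Never describe a cover as if the performing artist wrote it.";

// Wording taken from the per-section constraints quoted above.
const SECTION_CONSTRAINTS: { section: string; rule: string }[] = [
  {
    section: "Critical Reception",
    rule: "Only cite publications you are certain existed. Do not fabricate or paraphrase review quotes.",
  },
  {
    section: "Trivia and Deep Lore",
    rule: "Every claim here must be grounded in something you actually know. A wrong specific detail is worse than no detail.",
  },
];

// Assemble the guardrails into a single prompt string, one rule per line.
function buildGuardrailPrompt(track: TrackMatch): string {
  const sectionLines = SECTION_CONSTRAINTS.map((c) => `- ${c.section}: ${c.rule}`);
  return [
    ROLE,
    `Track: "${track.title}" by ${track.artist}`,
    `Checkpoint: ${PRE_WRITING_CHECKPOINT}`,
    `Cover songs: ${COVER_RULE}`,
    "Section rules:",
    ...sectionLines,
  ].join("\n");
}
```

Keeping each rule as a named constant means a guardrail can be revised or tested on its own without touching the rest of the prompt.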

The framing shift mattered as much as the specific rules. Rather than instructing the LLM to “be accurate,” the revised prompt gave it a journalist identity and tied precision to credibility.

Genre-Aware Tone

The Voice and Tone Guide wasn’t a reference document. It became an active component of the generation pipeline.

What changed:

A genre detection instruction: read the genre field from incoming metadata and adjust editorial register accordingly.

The Genre Tone Guide incorporated directly into the prompt as a structured reference, with each entry specifying sentence texture, vocabulary, cultural references, and a Do/Don’t example pair.

A paragraph structure rule: two to four paragraphs per section, because dense single-block prose is a content design problem on a phone screen.
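The changes above could be sketched as a genre lookup plus a paragraph check. This is an illustration under assumptions: the profile fields follow the list above, but every entry is a placeholder (the guide's real text isn't reproduced here), and `toneProfileFor` and `hasValidParagraphCount` are hypothetical helpers.

```typescript
// Fields mirror what each guide entry specifies; the values below are placeholders.
interface ToneProfile {
  sentenceTexture: string;
  vocabulary: string;
  culturalReferences: string;
  doExample: string;
  dontExample: string;
}

const DEFAULT_PROFILE: ToneProfile = {
  sentenceTexture: "<default sentence texture guidance>",
  vocabulary: "<default vocabulary direction>",
  culturalReferences: "<default reference points>",
  doExample: "<default Do example>",
  dontExample: "<default Don't example>",
};

// A Map avoids accidental hits on inherited object keys such as "constructor".
const GENRE_TONE_GUIDE = new Map<string, ToneProfile>([
  ["jazz", { ...DEFAULT_PROFILE, sentenceTexture: "<jazz sentence texture guidance>" }],
  ["hip-hop", { ...DEFAULT_PROFILE, sentenceTexture: "<hip-hop sentence texture guidance>" }],
]);

// Read the genre field from metadata; fall back to the default profile when
// the genre is missing or not covered by the guide.
function toneProfileFor(genre: string | undefined): ToneProfile {
  const key = (genre ?? "").trim().toLowerCase();
  return GENRE_TONE_GUIDE.get(key) ?? DEFAULT_PROFILE;
}

// Paragraph structure rule: two to four paragraphs per section,
// where paragraphs are separated by at least one blank line.
function hasValidParagraphCount(sectionText: string): boolean {
  const paragraphs = sectionText
    .split(/\n\s*\n/)
    .filter((p) => p.trim().length > 0);
  return paragraphs.length >= 2 && paragraphs.length <= 4;
}
```

The paragraph check runs on generated output rather than inside the prompt, so it can flag a dense single-block section even when the model ignores the instruction.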

Evaluating the Prompt

I generated articles for the same songs using the before and after prompts, then assessed the outputs against the Voice and Tone Guide criteria: paragraph structure, genre tone, specificity, hagiography avoidance, and narrative drive. I then used a second LLM instance as a structured judge, scoring each article on those same five dimensions.
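A before-and-after comparison like this one can be reduced to a per-dimension difference in judge scores. The sketch below assumes the judge returns one numeric score per dimension on some fixed scale; the scale, the `Scores` type, and both function names are assumptions for illustration, not the evaluation code that was actually used.

```typescript
// The five dimensions named in the Voice and Tone Guide criteria.
const DIMENSIONS = [
  "paragraphStructure",
  "genreTone",
  "specificity",
  "hagiographyAvoidance",
  "narrativeDrive",
] as const;

type Dimension = typeof DIMENSIONS[number];

// One judge score per dimension (the numeric scale is an assumption).
type Scores = Record<Dimension, number>;

// Per-dimension change from the old prompt to the revised prompt.
function scoreDeltas(before: Scores, after: Scores): Scores {
  const deltas = {} as Scores;
  for (const d of DIMENSIONS) {
    deltas[d] = after[d] - before[d];
  }
  return deltas;
}

// The dimension that improved least: a pointer to where the prompt still falls short.
function weakestGain(deltas: Scores): Dimension {
  let worst: Dimension = DIMENSIONS[0];
  for (const d of DIMENSIONS) {
    if (deltas[d] < deltas[worst]) worst = d;
  }
  return worst;
}
```

Looking at the weakest gain rather than an overall average keeps the focus on where the revised prompt did not help, which is the more useful finding for the next revision.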

What the evaluation found:

Jazz: the tone shift was immediate and demonstrable. Sentence rhythm, vocabulary, and cultural reference all adapted visibly to the genre guidance.

Hip-hop: content quality improved meaningfully, but tonal adaptation was subtler.

Critical Reception sections were the most resistant to change across both genres, likely because reportorial writing has less room for tonal play than narrative sections.

The evaluation didn’t just confirm that the prompt worked. It identified where it didn’t, and why.

Designing for the Music Obsessive

Every color in the app is meant to evoke analog warmth.

The foundation is near-black ("void," #0C0C0C) and warm off-white ("cream," #EDE9E0). The contrast is high without the clinical sterility of pure black and white. The sole accent is ember (#B85C38), a burnt orange that appears on section labels, interactive states, and the logo.
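The "high contrast" claim can be checked with the WCAG contrast ratio formula. The sketch below implements that formula for 6-digit hex colors; the `PALETTE` object and function names are illustrative, and only the three hex values come from the text above.

```typescript
// Hex values from the palette described above.
const PALETTE = {
  void: "#0C0C0C",
  cream: "#EDE9E0",
  ember: "#B85C38",
} as const;

// Convert one 8-bit sRGB channel to linear light.
// 0.04045 is the sRGB threshold; WCAG text has also used 0.03928,
// and no 8-bit channel value falls between the two.
function channelToLinear(value8bit: number): number {
  const c = value8bit / 255;
  return c <= 0.04045 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

// WCAG relative luminance of a "#RRGGBB" color.
function relativeLuminance(hex: string): number {
  const n = parseInt(hex.slice(1), 16);
  const r = channelToLinear((n >> 16) & 0xff);
  const g = channelToLinear((n >> 8) & 0xff);
  const b = channelToLinear(n & 0xff);
  return 0.2126 * r + 0.7152 * g + 0.0722 * b;
}

// WCAG contrast ratio: ranges from 1 (identical) to 21 (black on white).
function contrastRatio(hexA: string, hexB: string): number {
  const la = relativeLuminance(hexA);
  const lb = relativeLuminance(hexB);
  const lighter = Math.max(la, lb);
  const darker = Math.min(la, lb);
  return (lighter + 0.05) / (darker + 0.05);
}

const creamOnVoid = contrastRatio(PALETTE.cream, PALETTE.void);
```

For cream on void, the ratio should come out well above the 7:1 that WCAG sets as its strictest threshold for body text, while staying short of the 21:1 of pure black on white.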

Dig Deeper

The Recommendation Layer

The original Deep Cut ended abruptly: editorial content, then a button back to the listening room. A reader who'd just spent two minutes learning about a song's hidden history had nowhere to go next.

This was addressed by adding “Dig Deeper,” which presents three recommendations at the bottom of every article, each pulling in a different direction. "Key Influence" points backward: a song you can hear in the DNA of the track. "In Conversation" points sideways: a contemporary working through the same idea from a different angle. "Surprising Connection" points across boundaries entirely: a shared producer, an uncredited session musician, an unlikely influence confession.

Instead of a "Surprising Connection," the first version of Dig Deeper recommended another song by the same artist. This was changed to surface what algorithms typically can't: shared producers, uncredited session musicians, unlikely influence confessions. The kind of knowledge that lives in liner notes, not listening patterns. In other words, a form of personalization more in line with the interest-driven approach at the core of Deep Cut.
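One way to keep the three directions distinct is to give each recommendation an explicit kind and validate the set before rendering it. The sketch below is a hypothetical data shape, not the app's schema; the same-artist check is an assumption drawn from the change described above.

```typescript
// The three directions a recommendation can pull in.
type RecKind = "key_influence" | "in_conversation" | "surprising_connection";

// Hypothetical shape for one Dig Deeper recommendation.
interface Recommendation {
  kind: RecKind;
  title: string;
  artist: string;
  reason: string; // the one-line editorial hook shown to the reader
}

const REQUIRED_KINDS: RecKind[] = ["key_influence", "in_conversation", "surprising_connection"];

// Returns a list of problems; an empty list means the set is usable.
function validateDigDeeper(sourceArtist: string, recs: Recommendation[]): string[] {
  const problems: string[] = [];
  const normalize = (s: string) => s.trim().toLowerCase();

  // Exactly one recommendation per direction.
  for (const kind of REQUIRED_KINDS) {
    const count = recs.filter((r) => r.kind === kind).length;
    if (count !== 1) problems.push(`expected exactly one ${kind}, found ${count}`);
  }

  // Assumed rule: a surprising connection by the same artist repeats the first version's mistake.
  for (const r of recs) {
    if (r.kind === "surprising_connection" && normalize(r.artist) === normalize(sourceArtist)) {
      problems.push("surprising_connection should not be by the same artist");
    }
  }
  return problems;
}
```

Returning a list of problems rather than a boolean makes it possible to regenerate only the recommendation that failed instead of the whole set.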

Voice and Tone Design & Prompt Engineering

Voice and tone design can’t be separated from prompt engineering. The Voice and Tone Guide isn’t a reference document that sits alongside the product. It’s an active component of the generation system, directly shaping every piece of content the user reads.

Accuracy and Voice Are Separate Problems

This distinction matters for how the product should be built going forward. The prompt engineering work done to shape voice, register, and specificity is real and it works. But voice is not the same as factual reliability, and solving for one does not solve for the other. A future version of Deep Cut needs a fact-checking layer that is architecturally separate from the editorial layer so that the model's confidence in its writing doesn't paper over uncertainty in its sources.